Author Archives: P.T.R. Rupprecht

Layer-wise decorrelation in deep-layered artificial neuronal networks

The most commonly used deep networks are purely feed-forward nets. The input is passed to layers 1, 2, 3, then at some point to the final layer (which can be 10, 100 or even 1000 layers away from the input). …

Posted in Data analysis, machine learning, Neuronal activity

Tagged CNN, Data analysis, deep learning, machine learning, Network analysis, Python

Understanding style transfer

‘Style transfer’ is a method based on deep networks that extracts the style of a painting or picture in order to transfer it to a second picture. For example, the style of a butterfly image (left) is transferred to the …

Posted in Data analysis, machine learning

Tagged deep learning, machine learning, Network analysis, Python

Can two-photon scanning be too fast?

The following back-of-the-envelope calculations do not lead to any useful result, but you might be interested in reading through them if you want to get a better understanding of what happens during two-photon excitation microscopy. The basic idea of two-photon microscopy …

The basis of feature spaces in deep networks

In a new article on Distill, Olah et al. write up a very readable and useful summary of methods to look into the black box of deep networks by feature visualization. I had already spent some time with this topic …

Posted in machine learning, Network analysis, Neuronal activity

Tagged CNN, deep learning, machine learning, Network analysis, Python

All-optical entirely passive laser scanning with MHz rates

Is it possible to let a laser beam scan over an angle without moving any mechanical parts to deflect the beam? It is. One strategy is to use a very short-pulsed laser beam: A short pulse width means a finite …

The most interesting machine learning AMAs on Reddit

It is very clear that Reddit belongs to the rather wild part of the internet. But especially for practical questions, Reddit can be very useful, and even more so for anything connected to the internet or computer technology, like machine …

Posted in Data analysis, machine learning

Tagged deep learning, machine learning, Python, theoretical neuroscience

How deconvolution of calcium data degrades with noise

How does the noisiness of the recorded calcium data affect the performance of spike-inferring deconvolution algorithms? I cannot offer a rigorous treatment of this question (Update August 2020: Now I have treated this question rigorously.), but I can offer some intuitive examples. …

A convolutional network to deconvolve calcium traces, living in an embedding space of statistical properties

As mentioned before (here and here), the spikefinder competition was set up earlier this year to compare algorithms that infer spiking probabilities from calcium imaging data. Together with Stephan Gerhard, a postdoc in our lab, I submitted an algorithm based on convolutional networks. Looking …

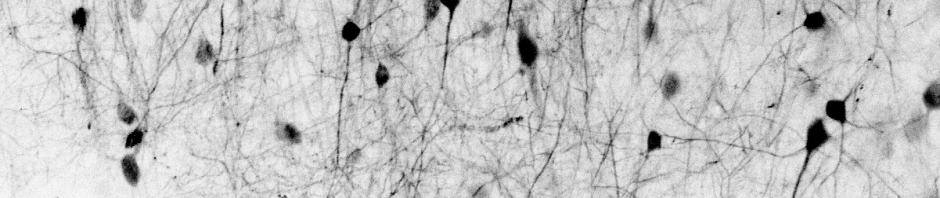

A short report from a Cold Spring Harbor lab course

One of the best things about being a PhD student is that one is supposed to learn new things. As part of this mission, I attended a two-week laboratory course at Cold Spring Harbor Laboratory on ‘Advanced Techniques in …

Whole-cell patch clamp, part 3: Limitations of quantitative whole-cell voltage clamp

Before I first dived into experimental neuroscience, I imagined whole-cell voltage clamp recordings to be the holy grail of precision. Directly listening to the currents that flow inside a living neuron! How beautiful and precise, compared to poor-resolution techniques like fMRI or …

Posted in Data analysis, electrophysiology, Neuronal activity, zebrafish

Tagged Data analysis, electrophysiology, zebrafish
