During my physics studies, I got to know several mathematical tools that turned out to be extremely useful for describing the world and analyzing data, for example vector calculus, Fourier analysis and differential equations. Another tool that I find particularly useful in my current work as a neuroscientist, but which is rarely mentioned explicitly, is the correlation function. In the following, I will try to give an intuition for the power of correlation functions using a couple of examples.

What are correlation functions?

To put it in very simple terms, a correlation coefficient measures how similar two signals ($A$ and $B$) are after being normalized. Unlike correlation coefficients, correlation functions are not single values but functions computed from two input signals $A$ and $B$. This can be a correlation function $C_{AB}(\tau)$ of a time lag $\tau$, or $C_{AB}(\Delta x)$ of a distance in space $\Delta x$. At a time lag or distance of zero, the correlation function recovers the correlation coefficient, $C_{AB}(0) \propto r_{AB}$, up to a normalizing factor.

The value of a correlation function at a given value of $\tau$ or $\Delta x$ indicates how similar the two input signals $A$ and $B$ are when one of the signals is shifted in time by $\tau$ or in space by $\Delta x$.
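One common way to write this down is shown below. It applies to mean-subtracted signals $A$ and $B$ with standard deviations $\sigma_A$ and $\sigma_B$. This is a sketch of one convention only, because the order of $A$ and $B$ and the normalization differ between fields and software packages:

$$C_{AB}(\tau) = \langle A(t)\, B(t+\tau) \rangle_t, \qquad r_{AB} = \frac{C_{AB}(0)}{\sigma_A\,\sigma_B}$$

Here $\langle \cdot \rangle_t$ denotes the average over time $t$. For spatial correlation functions, $t$ and $\tau$ are replaced by a position $x$ and a distance $\Delta x$.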

To make the result of this operation clearer, here are two simple examples. The signals $A$ (black) and $B$ (gray) are noisy sine waves that are in phase (left) or out of phase (right):

For the synchronous case, the cross-correlation function peaks at a time lag of $\tau = 0$. For the out-of-phase case, the peak is shifted to a nonzero time lag that corresponds to the phase offset between the two signals. The value at a time lag of 0 is proportional to the correlation coefficient: it is high on the left and close to zero on the right. Also note that the correlation function averages over the full signal duration, which suppresses the noise.
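The two examples above can be mimicked with synthetic data. The following is a minimal sketch, and the parameters are all assumptions chosen for illustration: a 5 Hz sine sampled at 1 kHz, with $B$ lagging $A$ by a quarter period (50 ms), so that the zero-lag value is close to zero as in the right-hand example. The lag of the correlation peak recovers the shift. With this ordering of the arguments, a positive lag means that $B$ lags $A$.

```python
import numpy as np

# Synthetic example (assumed parameters): two noisy sine waves with a known lag
rng = np.random.default_rng(0)
fs = 1000.0                      # sampling rate in Hz
t = np.arange(0, 2.0, 1 / fs)    # 2 s of signal
f = 5.0                          # oscillation frequency in Hz (period 200 ms)
true_lag = 0.05                  # B lags A by 50 ms, i.e. a quarter period

A = np.sin(2 * np.pi * f * t) + 0.3 * rng.standard_normal(t.size)
B = np.sin(2 * np.pi * f * (t - true_lag)) + 0.3 * rng.standard_normal(t.size)

# Subtract the means, then compute the full cross-correlation sum_n B[n + k] * A[n]
A = A - A.mean()
B = B - B.mean()
C = np.correlate(B, A, mode="full")
lags = np.arange(-(t.size - 1), t.size) / fs   # lag axis in seconds

# Search only within half a period around zero, to avoid picking a neighbouring peak
window = np.abs(lags) < 0.5 / f
est_lag = lags[window][np.argmax(C[window])]
print(f"estimated lag: {est_lag * 1000:.0f} ms")
```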

Computing the correlation function in Matlab or Python

Computing the correlation function is straightforward in Matlab or Python. Make sure to subtract the mean of each signal first (e.g., B = B - mean(B) in Matlab or B = B - np.mean(B) in Python), because these functions do not do this for you.

Matlab:

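A = rand(1000,1);
B = rand(1000,1);
A = A - mean(A); % subtract the means first
B = B - mean(B);
C = xcorr(A,B,'unbiased'); % 'unbiased' divides each lag by the number of overlapping samples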

Python:

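import numpy as np
A = np.random.normal(0, 1, 1000)
B = np.random.normal(0, 1, 1000)
A = A - np.mean(A)  # subtract the means first
B = B - np.mean(B)
C = np.correlate(A, B, 'full')  # not normalized; lags -999 to 999

or

import scipy.signal as signal
C = signal.correlate(A, B)  # same result; may use FFTs for long signals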

It is a good, if somewhat tedious, exercise to write one’s own cross-correlation function in a basic programming language. The normalization is usually what causes headaches.
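As a starting point for that exercise, here is a minimal sketch in plain Python/NumPy. The function name `xcorr_unbiased` and the `max_lag` parameter are hypothetical. The function uses the same lag convention as `np.correlate` (c[k] = sum over n of a[n + k] * b[n]) and divides each lag by the number of overlapping samples, N - |k|. That "unbiased" normalization should correspond to Matlab's `xcorr(A,B,'unbiased')` with mean-subtracted inputs, restricted to |k| ≤ `max_lag`.

```python
import numpy as np

def xcorr_unbiased(a, b, max_lag):
    """Cross-correlation c[k] = mean over n of a[n + k] * b[n], for k = -max_lag..max_lag.

    Both signals are mean-subtracted first. Each lag is divided by the number of
    overlapping samples (N - |k|), i.e. the 'unbiased' normalization.
    """
    a = np.asarray(a, dtype=float) - np.mean(a)
    b = np.asarray(b, dtype=float) - np.mean(b)
    n = len(a)
    if len(b) != n:
        raise ValueError("signals must have the same length")
    lags = np.arange(-max_lag, max_lag + 1)
    c = np.empty(len(lags))
    for i, k in enumerate(lags):
        if k >= 0:
            overlap = a[k:] * b[:n - k]    # pairs a[n + k] * b[n], n = 0 .. N-k-1
        else:
            overlap = a[:n + k] * b[-k:]   # pairs a[n + k] * b[n], n = -k .. N-1
        c[i] = overlap.sum() / (n - abs(k))
    return lags, c
```

A quick sanity check is to compare the result against `np.correlate(a, b, 'full')`, divided by N - |k| at each lag.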

1. Spatial correlation functions for image registration

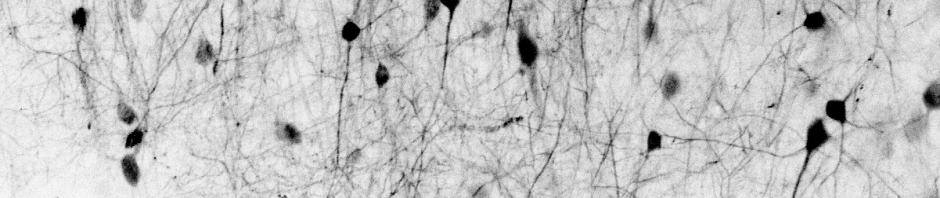

In microscopy, one often needs to map two images onto each other. The following example shows two average images of the same brain region, recorded at different time points and therefore shifted relative to each other due to drift. I included a horizontal line for orientation:

To determine the drift, we can use a correlation function that measures the similarity of the two images for all possible shifts. Here, the result is a shift of 0 pixels in the x-direction and 4 pixels in the y-direction (computed in Matlab):

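% movie_AVG1, movie_AVG2: average images 1 and 2 (2D matrices of equal size)
% Cross-correlation via FFTs; fftshift moves zero shift to the center
result_conv = fftshift(real(ifft2(conj(fft2(movie_AVG1)).*fft2(movie_AVG2))));
% Position of the correlation maximum (first one if there are ties)
[y,x] = find(result_conv == max(result_conv(:)), 1);
% Shift relative to the center, i.e. relative to zero shift
shift_y = y - ( floor(size(movie_AVG1,1)/2) + 1 )
shift_x = x - ( floor(size(movie_AVG1,2)/2) + 1 )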

Here, I calculated the correlation function using fast Fourier transforms. This takes advantage of the correlation theorem: the Fourier transform of the cross-correlation of two signals equals the complex conjugate of the Fourier transform of one signal multiplied by the Fourier transform of the other. I could also have done the same computation with the built-in Matlab function xcorr2(movie_AVG1,movie_AVG2). However, that function is much slower and requires subtracting the respective mean from each image.
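For readers working in Python, here is a rough NumPy translation of the FFT-based approach. It is a sketch rather than a drop-in registration tool. The function name `estimate_shift` is hypothetical, and it assumes integer shifts smaller than half the image size. The correlation computed this way is circular (it wraps around the image borders), so the synthetic test uses `np.roll` to create exactly such a shift. For real images with drift, subtracting the mean or applying a window to the edges usually makes the peak more reliable.

```python
import numpy as np

def estimate_shift(img1, img2):
    """Estimate the integer (dy, dx) translation of img2 relative to img1.

    Uses the correlation theorem: the (circular) cross-correlation is the
    inverse FFT of conj(FFT(img1)) * FFT(img2).
    """
    corr = np.real(np.fft.ifft2(np.conj(np.fft.fft2(img1)) * np.fft.fft2(img2)))
    corr = np.fft.fftshift(corr)                     # zero shift moves to the center
    peak_y, peak_x = np.unravel_index(np.argmax(corr), corr.shape)
    center_y, center_x = img1.shape[0] // 2, img1.shape[1] // 2
    return int(peak_y - center_y), int(peak_x - center_x)
```

For example, `estimate_shift(img, np.roll(img, (4, 0), axis=(0, 1)))` should return `(4, 0)` for a random test image `img`. This mirrors the 4-pixel shift in y found above.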

Similar algorithms underlie many image registration functions in ImageJ, Python or Matlab.

2. Local spatial correlation functions for particle image velocimetry

To go one step further, one can also compute local instead of global shifts, for example if the images are deformed non-uniformly, resulting in local deformation fields.

A more interesting application of the same principle is a method called particle image velocimetry (PIV), which was developed in the field of experimental fluid mechanics. In a sequence of images, correlation functions are used to extract local flow fields, as well as sinks and sources of the observed transport phenomenon. Here is an example movie from my Diploma thesis work: a one-cell C. elegans embryo just before the first cell division, observed using DIC microscopy. I used the granular material in the cytoplasm to track the cytosolic flow patterns with PIV (using the toolbox PIVlab). The overlaid yellow arrows indicate the (wildly changing) direction of the local cytosolic flow field:
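The core idea of local shifts and PIV can be sketched in a few lines. The two frames are cut into small "interrogation windows", and one shift is estimated per window with the same FFT-based cross-correlation as for global registration. This is a simplified illustration: the function names `window_shift` and `piv_field` and the window size of 32 pixels are hypothetical choices, and this is not how PIVlab is implemented. Real PIV software typically adds refinements such as overlapping windows and sub-pixel peak interpolation.

```python
import numpy as np

def window_shift(win1, win2):
    """Integer (dy, dx) displacement of win2 relative to win1 via FFT cross-correlation."""
    win1 = win1 - win1.mean()
    win2 = win2 - win2.mean()
    corr = np.real(np.fft.ifft2(np.conj(np.fft.fft2(win1)) * np.fft.fft2(win2)))
    corr = np.fft.fftshift(corr)                 # zero displacement at the center
    py, px = np.unravel_index(np.argmax(corr), corr.shape)
    return int(py - win1.shape[0] // 2), int(px - win1.shape[1] // 2)

def piv_field(frame1, frame2, win=32):
    """Local displacement field on a grid of non-overlapping win x win windows.

    Returns integer arrays dy, dx with one entry per interrogation window.
    """
    ny, nx = frame1.shape[0] // win, frame1.shape[1] // win
    dy = np.zeros((ny, nx), dtype=int)
    dx = np.zeros((ny, nx), dtype=int)
    for i in range(ny):
        for j in range(nx):
            sl = (slice(i * win, (i + 1) * win), slice(j * win, (j + 1) * win))
            dy[i, j], dx[i, j] = window_shift(frame1[sl], frame2[sl])
    return dy, dx
```

As a quick test, if the top half of a random "particle" image moves 2 pixels to the right and the bottom half moves 3 pixels up, the windows in each half should report those displacements.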

3. Temporal cross-correlation functions

One of the most fascinating uses of cross-correlation functions for the analysis of experimental data is fluorescence cross-correlation spectroscopy (FCCS). Its simpler and more commonly used version, fluorescence correlation spectroscopy (FCS), is based on auto-correlation instead of cross-correlation functions.

Peri-stimulus time histograms (PSTHs) are a much more basic analysis tool. Electrophysiologists commonly use them to quantify how often something (for example, a spike) occurs around certain trigger events. Sometimes, events as ill-defined as the crests of an oscillatory signal are used as triggers for a PSTH. Correlation functions avoid this mess: instead of relying on discrete trigger events, they measure how much a quantity depends on the continuous history of the trigger signal.
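The connection between the two approaches can be made explicit. If the trigger is a binary event train (1 at event samples, 0 elsewhere), cross-correlating it with a signal and dividing by the number of events gives the event-triggered average of that signal. If the signal is itself a binary spike train, this is an unnormalized PSTH with one-sample bins. Replacing the binary train with a continuous trigger signal turns this into the general cross-correlation described above. The sketch below assumes events far enough from the signal edges. The function name is hypothetical.

```python
import numpy as np

def event_triggered_average(events, signal, max_lag):
    """Average of `signal` around event times, computed as a cross-correlation.

    events: binary array (1 at event samples, 0 elsewhere), same length as signal.
    Returns lags k and the mean of signal[t + k] over all event times t.
    Samples outside the signal are treated as zero, so events near the edges bias the result.
    """
    events = np.asarray(events, dtype=float)
    signal = np.asarray(signal, dtype=float)
    n = len(signal)
    # full[k + n - 1] = sum over t of signal[t + k] * events[t]
    full = np.correlate(signal, events, mode="full")
    lags = np.arange(-max_lag, max_lag + 1)
    return lags, full[lags + n - 1] / events.sum()
```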

In electrophysiological work published in 2018, I used correlation functions to measure the phase relationship between an oscillatory local field potential (LFP) signal and an oscillatory component in a simultaneous whole-cell recording (for details, see part of figure 7 in the paper):

4. Autocorrelation functions for time series analysis

Auto-correlation functions are not only a tool for non-intuitive experimental methods like FCS; they are also ideal for quantifying periodicities in a time series. For example, autocorrelations can easily quantify oscillatory behavior in the swim pattern of a fish, in the firing of a neuron or in the spatial density of clouds.

Here is an example, again from an LFP recording. On the left, the signal seems clearly oscillatory, but how can we properly quantify the oscillatory period? We use an auto-correlation function, and the peak at around 40 ms in the plot on the right clearly indicates the oscillatory period (black arrow):
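The procedure can be sketched as follows. The recorded LFP is not available here, so this example uses a synthetic stand-in: a 25 Hz sine (40 ms period) plus white noise, sampled at an assumed 1 kHz. The function names and the search range of 20 to 60 ms are hypothetical. The sketch computes a biased auto-correlation normalized to 1 at lag zero and takes the highest peak within a plausible lag range, which roughly corresponds to reading the peak off the plot by eye.

```python
import numpy as np

def autocorr(x):
    """Biased autocorrelation of a mean-subtracted signal, normalized to 1 at lag 0."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    ac = np.correlate(x, x, mode="full")[len(x) - 1:]   # keep lags 0 .. N-1
    return ac / ac[0]

def period_from_autocorr(x, fs, min_lag_s, max_lag_s):
    """Lag (in s) of the highest autocorrelation peak within [min_lag_s, max_lag_s]."""
    ac = autocorr(x)
    lo, hi = int(round(min_lag_s * fs)), int(round(max_lag_s * fs))
    return (lo + np.argmax(ac[lo:hi + 1])) / fs

# Synthetic stand-in for an LFP trace (assumed): 25 Hz oscillation plus noise
rng = np.random.default_rng(5)
fs = 1000.0
t = np.arange(0, 2.0, 1 / fs)
lfp = np.sin(2 * np.pi * 25 * t) + 0.5 * rng.standard_normal(t.size)
period = period_from_autocorr(lfp, fs, min_lag_s=0.02, max_lag_s=0.06)
print(f"estimated period: {period * 1000:.0f} ms")
```

Restricting the search range avoids the trivial maximum at lag zero and the later peaks at multiples of the period.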

Correlation functions in physics

If you find the above examples interesting and want to understand what correlation functions can be used for, it could be a good idea to dive into physics, where correlation functions are all over the place:

- Fluorescence correlation spectroscopy and its many variations like FCCS or RICS

- The radial distribution function of crystals, liquids and gases

- The divergence of the spatial correlation length during phase transitions

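In addition, the mathematical aspects of correlation functions are quite rewarding to explore. One example is the intimate relationship between auto-correlation functions and power spectra, described by the Wiener-Khinchin theorem: the power spectrum is the Fourier transform of the auto-correlation function.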
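A discrete version of this relationship is easy to check numerically. For a finite signal, the circular auto-correlation equals the inverse FFT of the power spectrum |X(f)|². The continuous theorem instead relates the auto-correlation of a stationary process to its power spectral density. The random test signal below is an arbitrary assumption.

```python
import numpy as np

rng = np.random.default_rng(6)
x = rng.standard_normal(256)
x = x - x.mean()

# Circular autocorrelation computed directly: r[k] = sum_n x[n] * x[(n + k) mod N]
r_direct = np.array([np.dot(x, np.roll(x, -k)) for k in range(len(x))])

# Same quantity from the power spectrum |X(f)|^2 via an inverse FFT
power = np.abs(np.fft.fft(x)) ** 2
r_from_spectrum = np.real(np.fft.ifft(power))

print(np.allclose(r_direct, r_from_spectrum))   # True
```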

Another interesting use of auto-correlation functions is the fluctuation-dissipation theorem. It describes how the spontaneous fluctuations of a system close to thermodynamic equilibrium predict its linear response to external perturbations. It is a bit discouraging for biologists that this theorem can hardly be applied to biological systems, which live far from thermodynamic equilibrium and whose responses are rarely linear.

Still, it is amazing to see what physics can do with correlation functions and how powerful correlation functions are at extracting precise measurements from sometimes very noisy data.

Found your post very enlightening. I have this weird impression that there are different names for the same things (e.g., cross-correlation and correlation function), which makes things very confusing. It seems that people from physics call these operations correlation functions and people from signal processing call them cross-correlations. I wonder why there is such confusion? Discrete vs. continuous? On top of that, I have seen researchers use Pearson correlation coefficients and cross-correlation interchangeably, and even call cross-correlation operations convolutions (if I am not mistaken, people working on deep learning and CNNs do this). Would you comment on that?

Hi – thanks for your comment! I think the nomenclature is confusing not only for you.

First, Pearson’s correlation coefficient vs. cross-correlation. The difference between a correlation coefficient and a cross-correlation is that the latter is also computed for shifts/delays, resulting in a function instead of a single value. The “Pearson” correlation coefficient is a specific correlation coefficient defined by its normalization (division by the standard deviations of the two signals). It is also possible to calculate a correlation function with the same or a very similar normalization. However, it would be misleading to call this normalized correlation function a Pearson’s correlation function. I would therefore not recommend mixing up “Pearson’s” with cross-correlation.
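To illustrate the point about normalization, here is a minimal sketch of a cross-correlation normalized with Pearson-style scaling. It subtracts the means and divides by N times the two (population) standard deviations. The function name is hypothetical. With this choice, the value at lag zero equals Pearson's r. The values at other lags are still not Pearson coefficients of the shifted, overlapping segments, which is one reason to keep the two names apart.

```python
import numpy as np

def normalized_xcorr(a, b):
    """Cross-correlation divided by N * std(a) * std(b), for lags -(N-1)..N-1.

    With this normalization the zero-lag value equals Pearson's r.
    """
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    n = len(a)
    a = a - a.mean()
    b = b - b.mean()
    c = np.correlate(a, b, mode="full") / (n * a.std() * b.std())
    lags = np.arange(-(n - 1), n)
    return lags, c
```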

Second, correlation functions vs. cross-correlation. These two terms can almost always be used interchangeably, and the distinction has nothing to do with discrete vs. continuous (both are defined for continuous signals but can be discretized). Cross-correlation, as you pointed out, is typically used in signal processing and always means the cross-correlation between two signals. Correlation functions in physics, on the other hand, are a more general concept that can involve not only two but three or more signals. For modern physics, these are important, although not very intuitive, tools. Anyway, if you speak of a correlation function computed from two signals, you can also call it a cross-correlation.

Third, cross-correlation vs. convolution. The mathematical difference is actually quite minor (more or less just a flipped sign in the argument of one of the signals). The real difference lies in the purpose of the operation. Cross-correlation is used to see for which shifts the two input signals are similar. Convolution is typically used to process a larger signal (e.g., an image) with a smaller filter in order to enhance or suppress features of the original signal (e.g., blurring with a Gaussian filter, or edge detection). I would therefore tend to call the operators used in CNNs convolutions, irrespective of the sign conventions used. Technically, however, it would be fine to call them cross-correlations.
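In the continuous case, the cross-correlation is $(f \star g)(\tau) = \int f(t)\, g(t+\tau)\, dt$ and the convolution is $(f * g)(\tau) = \int f(t)\, g(\tau - t)\, dt$. In practice, the "changed minus sign" means that correlating with a kernel is the same as convolving with the time-reversed kernel. The small NumPy check below illustrates this for real-valued inputs; for complex signals, the kernel would also have to be conjugated. The arrays are arbitrary examples.

```python
import numpy as np

a = np.array([1.0, 2.0, 3.0, 4.0])
v = np.array([0.0, 1.0, 0.5])

corr = np.correlate(a, v, mode="full")
conv_flipped = np.convolve(a, v[::-1], mode="full")   # convolution with the reversed kernel

print(corr)                              # [0.5 2.  3.5 5.  4.  0. ]
print(np.allclose(corr, conv_flipped))   # True
```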

Finally, there is no general agreement on how to normalize correlation functions or cross-correlations (whereas convolutions are generally not normalized). Depending on whom you speak with, people will expect cross-correlations or correlation functions to be normalized in a very specific way. This can lead to some confusion across fields and is good to keep in mind if you are working at such an interface.

Hope that helps!

Thanks for your answer. Again, very enlightening.

Thanks! You’re welcome.
