I was surprised to find a method like DCM (dynamic causal modelling) in Olav Stetter’s list (link) of neural network methods (even labelled as a so-called ‘standard method’), because it differs from the methods I discussed before. In this post I explain why this method is not my method of choice for analyzing activity data and for understanding neuronal networks.

I refer to the Scholarpedia article by Andre Marreiros (link) for an easy-to-read introduction. It is accessible, although in my opinion it lacks the clarity of some of the better Scholarpedia articles. DCM consists of two or three steps:

- defining a model of what is happening and what is being measured, using coupled differential equations

- deciding which model best describes the data, using Bayesian methods

- fitting the remaining model parameters to the data

Basically, I like the idea of building a model that describes a specific process, rather than relying on purely analysis-driven approaches such as transfer entropy (link to my related post), correlation analysis (link), Granger causality (link) or mutual information (link).

But here, the model consists of simple differential equations:

$$\dot{x} = \Big(A + \sum_j u_j B^{(j)}\Big)\, x + C\, u$$

where $x$ is a vector of neuronal activities (not on the single-neuron level), $u$ is an external input (like a visual cue for an animal), and $A$, $B^{(j)}$ and $C$ are some kind of linear connectivity matrices.

If we drive a set of equations like this with a constant external input $u$, the neuronal activity will eventually reach a steady state, $x^* = -\big(A + \sum_j u_j B^{(j)}\big)^{-1} C u$, provided the system is stable, i.e. all eigenvalues of the effective coupling matrix $A + \sum_j u_j B^{(j)}$ have negative real parts. If the input changes, the system moves towards its newly defined steady state. One could call this a steady-state-following system. It might help to analyze the propagation of ‘bulk information’, e.g. in the visual pathway when light is switched on and off. But in my opinion, the more interesting part of the brain’s information processing is not a steady-state-following system, but something that lives at the edge of stability and constantly looks for new states into which it might escape. This is one reason why DCM does not appeal to me at first glance.
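A minimal sketch of this steady-state-following behaviour, assuming a hypothetical two-region system with the bilinear term set to zero, so that $\dot{x} = A x + C u(t)$. The values of $A$ and $C$, the on/off input and the helper names `simulate`, `is_stable_2x2` and `steady_state_2x2` are made up for illustration and are not part of any DCM software.

```python
# Hypothetical two-region example (assumption): B set to zero, so dx/dt = A x + C u(t).

def simulate(A, C, u_of_t, x0, t_end, dt=0.01):
    """Forward-Euler integration of dx/dt = A x + C u(t); returns the state after every step."""
    x = list(x0)
    states = []
    for k in range(int(round(t_end / dt))):
        u = u_of_t(k * dt)
        dx = [A[i][0] * x[0] + A[i][1] * x[1] + C[i] * u for i in range(2)]
        x = [x[i] + dt * dx[i] for i in range(2)]
        states.append(x)
    return states


def is_stable_2x2(A):
    """A real 2x2 matrix has only eigenvalues with negative real part iff trace < 0 and det > 0."""
    trace = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return trace < 0 and det > 0


def steady_state_2x2(A, C, u):
    """Solve A x* + C u = 0 for a constant input u (Cramer's rule, needs det(A) != 0)."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    b0, b1 = -C[0] * u, -C[1] * u
    return ((b0 * A[1][1] - A[0][1] * b1) / det,
            (A[0][0] * b1 - b0 * A[1][0]) / det)


def light(t):
    """Made-up input: 'light on' for the first 10 s, then off."""
    return 1.0 if t < 10.0 else 0.0


A = [[-1.0, 0.0], [0.5, -1.0]]  # region 1 drives region 2; negative self-connections
C = [1.0, 0.0]                   # the input only enters region 1

states = simulate(A, C, light, [0.0, 0.0], t_end=20.0)
print(is_stable_2x2(A), steady_state_2x2(A, C, 1.0))  # prints: True (1.0, 0.5)
print(states[999], states[-1])  # near the "on" steady state at t = 10 s, back near zero at t = 20 s
```

If region 1 excites itself instead (e.g. `A[0][0] = 0.1`), `is_stable_2x2` returns `False` and the simulated activity grows without bound rather than following the input.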

This simple model of neuronal bulk activity is complicated by the experiments that are typically carried out, namely fMRI and M/EEG. In fMRI, the neuronal activity $x$ is not measured directly but via the haemodynamics, which are described by the so-called balloon model: it captures the dependence between neuronal activity, vasodilatation, blood flow, deoxyhaemoglobin content and the fMRI measurement of the latter. That sounds like an explosion of parameters. Admittedly, there may be calibration experiments for these parameters. But when reading a paper, there are many things you simply have to trust.
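To give a feeling for how many parameters sit between $x$ and the measured signal, here is a sketch of the balloon model for a single region, in the form commonly used in DCM for fMRI (a vasodilatory signal $s$, inflow $f$, volume $v$ and deoxyhaemoglobin $q$). The function name `balloon_bold` and the dictionary `PARAMS` are hypothetical, the numeric values are commonly quoted defaults that should be treated as assumptions, and forward Euler is used only for simplicity.

```python
# Sketch of the balloon model for ONE region (assumed standard form, assumed default values).
PARAMS = {
    "kappa": 0.65,  # decay rate of the vasodilatory signal [1/s]
    "gamma": 0.41,  # rate of flow-dependent elimination [1/s]
    "tau": 0.98,    # haemodynamic transit time [s]
    "alpha": 0.32,  # Grubb's exponent (vessel stiffness)
    "rho": 0.34,    # resting oxygen extraction fraction
    "V0": 0.02,     # resting blood volume fraction
}


def balloon_bold(x_of_t, t_end, dt=0.01, p=PARAMS):
    """Map neuronal activity x(t) to a BOLD signal y(t); returns y after every Euler step."""
    s, f, v, q = 0.0, 1.0, 1.0, 1.0  # signal, inflow, volume, deoxyhaemoglobin (at rest)
    k1, k2, k3 = 7.0 * p["rho"], 2.0, 2.0 * p["rho"] - 0.2  # BOLD output coefficients
    bold = []
    for k in range(int(round(t_end / dt))):
        x = x_of_t(k * dt)
        E = 1.0 - (1.0 - p["rho"]) ** (1.0 / f)  # oxygen extraction at inflow f
        outflow = v ** (1.0 / p["alpha"])          # balloon: outflow grows with volume
        ds = x - p["kappa"] * s - p["gamma"] * (f - 1.0)
        df = s
        dv = (f - outflow) / p["tau"]
        dq = (f * E / p["rho"] - outflow * q / v) / p["tau"]
        s, f, v, q = s + dt * ds, f + dt * df, v + dt * dv, q + dt * dq
        bold.append(p["V0"] * (k1 * (1.0 - q) + k2 * (1.0 - q / v) + k3 * (1.0 - v)))
    return bold


bold = balloon_bold(lambda t: 0.1 if t < 20.0 else 0.0, t_end=40.0)
print(len(PARAMS), "haemodynamic parameters per region, on top of A, B and C")
print(max(bold))
```

Each of these six values could in principle differ between regions and subjects, and all of them sit on top of the coupling matrices.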

As a next step, competing models are evaluated with Bayesian methods. This means you have two or more models with largely unknown coupling parameters $A$, $B$ and $C$, and try to find out which model fits best, which seems an impossible task given the size of the parameter space. BMS (Bayesian model selection) is an interesting method that tries to overcome this problem: it compares models not only by how well they describe the data but also by their complexity (loosely, the number of free parameters). Formally, each model $m$ is scored by its evidence $p(y \mid m)$, the likelihood of the data $y$ averaged over the prior on the parameters, so parameters that do not improve the fit lower the score. Let’s assume this step works perfectly and without flaw. What do we get?
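The complexity penalty built into the model evidence can be seen in a toy setting where the evidence has a closed form. This is not DCM: it is a hypothetical comparison between model A ($y_i \sim \mathcal{N}(0, \sigma^2)$, no free parameter) and model B ($y_i \sim \mathcal{N}(\mu, \sigma^2)$ with prior $\mu \sim \mathcal{N}(0, \tau^2)$), assuming $\sigma$ and $\tau$ are known. Function names and data are illustrative.

```python
import math

# Toy Bayesian model comparison (assumption: sigma and tau known, Gaussian prior on mu).
#   Model A: y_i ~ N(0, sigma^2)                           (no free parameter)
#   Model B: y_i ~ N(mu, sigma^2), with mu ~ N(0, tau^2)   (one free parameter)


def log_evidence_A(y, sigma):
    """log p(y | A): plain Gaussian likelihood, nothing to integrate out."""
    n = len(y)
    return -0.5 * n * math.log(2 * math.pi * sigma**2) - sum(v * v for v in y) / (2 * sigma**2)


def log_evidence_B(y, sigma, tau):
    """log p(y | B): mu integrated out, so y ~ N(0, sigma^2 I + tau^2 * ones * ones^T)."""
    n, s = len(y), sum(y)
    s2, t2 = sigma**2, tau**2
    return (log_evidence_A(y, sigma)
            + 0.5 * math.log(s2 / (s2 + n * t2))      # Occam factor: penalty for the extra parameter
            + t2 * s * s / (2 * s2 * (s2 + n * t2)))  # gain from explaining a non-zero mean


def log_bayes_factor_B_vs_A(y, sigma=1.0, tau=1.0):
    """Positive values favour model B, negative values favour the simpler model A."""
    return log_evidence_B(y, sigma, tau) - log_evidence_A(y, sigma)


zero_mean = [0.3, -0.2, 0.1, -0.4, 0.2]
shifted = [v + 1.5 for v in zero_mean]
print(log_bayes_factor_B_vs_A(zero_mean))  # negative: the extra parameter is not worth it
print(log_bayes_factor_B_vs_A(shifted))    # positive: the offset explains the data
```

Model B can always fit at least as well as model A, yet it loses on data without an offset: averaging over the prior spreads its predictions thinly, which is exactly the trade-off between fit and complexity that BMS relies on.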

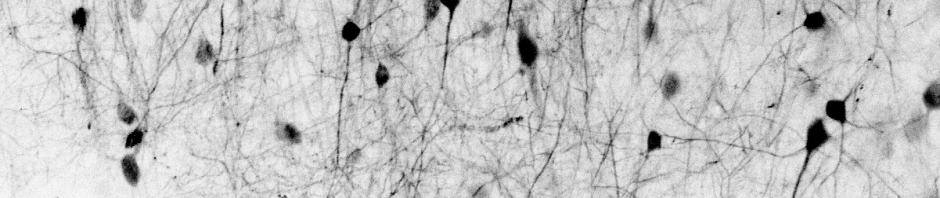

Then we know that model A describes the data better than model B, and model B better than model C. This is an interesting piece of information if, and only if, the model itself is interesting. Personally, I believe that only models on the neuronal level will help us gain a better understanding of how the brain works. If, however, DCM is formulated on the single-cell level, with $x$ being the activity of single neurons, a simple linear differential equation is a very poor description of the real dynamics. Linear dynamics rule out what is most interesting in neuronal networks: instabilities, bifurcations, living next to chaos. On the other hand, if the model is extended to include nonlinearities, there is no hope of making sense of the high-dimensional parameter space. If you disagree with my considerations, do not hesitate to let me know. I’m no expert in information theory yet, so maybe I’m overlooking some benefits of the approach.
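The claim that linear dynamics cannot produce bifurcations can be illustrated with a textbook toy, not a neuron model: compare $\dot{x} = a x$ with the pitchfork normal form $\dot{x} = a x - x^3$. For $a < 0$ both relax to $x = 0$. For $a > 0$ the linear system, whose only equilibrium is the origin, simply explodes, whereas the nonlinear one settles on one of two new stable states $\pm\sqrt{a}$, depending on where it starts. The helper `integrate` and all numbers are illustrative assumptions.

```python
# Toy contrast (assumption: scalar ODEs, forward Euler, made-up initial conditions).


def integrate(rhs, x0, t_end=20.0, dt=1e-3):
    """Forward-Euler integration of dx/dt = rhs(x); returns the final state."""
    x = x0
    for _ in range(int(round(t_end / dt))):
        x += dt * rhs(x)
    return x


for a in (-1.0, 1.0):
    linear = integrate(lambda x: a * x, 0.1)
    nonlinear_up = integrate(lambda x: a * x - x**3, 0.1)
    nonlinear_down = integrate(lambda x: a * x - x**3, -0.1)
    print(f"a={a:+.0f}: linear {linear:.3g}, nonlinear {nonlinear_up:.3f} / {nonlinear_down:.3f}")
```

Passing $a$ through zero changes the number of stable states in the nonlinear case; in the linear case it only flips between decay and divergence.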

Anyway, I think the Bayesian model selection step deserves a closer look of its own.