The neuroskeptic blog recently mentioned a viewpoint paper which includes a list of the solved and unsolved problems of neuroscience. I’m probably not yet as deep into neuroscience as the author of the paper is, but I find it tempting to sharpen my own mind by commenting on his list. He sorts the problems into those that are solved or soon will be (A), those that we should be able to solve in the next 50 years (B), those which can be solved in principle “but who knows when” (C), those that we might never solve (D), and metaquestions (E). I’ll pick only some of the list items. Here are three items which are actually one topic:

How can we image a live brain of 100,000 neurons at cellular and millisecond resolution? (A=solved)

How can we image a live mouse brain at cellular and millisecond resolution? (B=solved in 50 years)

How can we image a live human brain at cellular and millisecond resolution? (C=can be solved in principle)

As an example of the solved problem of imaging a whole living brain at millisecond resolution, the author cites the zebrafish light-sheet paper by Ahrens et al. (2013). Several things should be noted: This is not a real grown-up fish, but a larva only a few days old, which in addition has the big advantage of being almost transparent and immobilized. Also, the paper states that about 80% rather than 100% of the cells can be imaged. This makes a difference. And the temporal resolution is not on the millisecond timescale, but in the range of 1 Hz for volumetric imaging. Let’s not be over-optimistic concerning the present state of technology … Maybe at some point, optics will become more advanced and will be able to penetrate deeper into tissue using wave-front shaping. And in some decades, spatially more complex detector systems might be able to make more sense of fluorescence light emitted in all directions. But scattering is a hard problem, and if somebody sees a future where we can image a whole mouse brain with light, I would say: No way! But let’s be more optimistic and speculative. Imagine a genetically encoded voltage sensor that is not fluorescent, but emits photons itself [update 2015-06-19: maybe a brighter and faster version of this ‘nano-lantern’] as a linear function of the membrane potential of the neuron in which it resides, directly converting electrical field changes into infrared photon rates. We would then no longer have an excitation problem, but only a detection problem. This is an inverse problem and therefore difficult, but an array of some millions of photodetectors arranged in a helmet around the human skull that can detect single photons on a close-to-femtosecond timescale might be sufficient to allow a good guess of the activity of almost all neurons in the human brain. Maybe it would be necessary to add, in addition to the detector helmet, some miniaturized detectors inside the brain.
Alternatively, a genius finds both a voltage-coding protein that emits neutrinos based on the neuron’s activity, and a revolutionarily small and sensitive neutrino detector. Emitted neutrinos (or maybe someone invents another interesting particle that rarely interacts with brain matter, but does interact with detectors) can travel undisturbed through the head and hit the detectors, which cover the whole room in which the human being freely moves … Now, if we only consider all 80e9 single cells as units with a temporal resolution of 1 ms, this generates a data volume of about 4,800 terabytes for one minute of recording (80e9 cells × 1,000 samples per second × 60 s × 1 byte, with 8 bits resolution as a minimum to capture the whole dynamic range of membrane voltages); the intermediate processed data would be much bigger, though. And the need for dendritic imaging would increase this amount by two or three orders of magnitude. I hope this shows how difficult the data acquisition problem alone is. Not much reason to be optimistic. It is ok to set yourself difficult goals, but this goal is not a well-chosen one. The fact that we do not understand simple 500-cell recordings does not necessarily mean that we have to increase the number of recorded cells; it also means that we have to make more sense of what we have.
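
To make the arithmetic behind such a data-volume estimate explicit, here is a minimal sketch. It assumes one byte (8 bits) per sample, decimal terabytes (1 TB = 10^12 bytes), and no compression, metadata or intermediate data; the function and variable names are mine and purely illustrative. The total scales linearly with every factor, so a different sample width or unit convention changes the result in proportion.

```python
# Back-of-the-envelope data volume for recording every neuron's voltage.
# All constants are assumptions taken from the text or stated above.
N_NEURONS = 80_000_000_000   # neurons in a human brain (80e9)
SAMPLE_RATE_HZ = 1_000       # 1 ms temporal resolution
BYTES_PER_SAMPLE = 1         # 8 bits per sample
DURATION_S = 60              # one minute of recording


def recording_bytes(n_units, rate_hz, bytes_per_sample, duration_s):
    """Raw, uncompressed size in bytes of a multi-unit recording."""
    return n_units * rate_hz * bytes_per_sample * duration_s


total_bytes = recording_bytes(N_NEURONS, SAMPLE_RATE_HZ,
                              BYTES_PER_SAMPLE, DURATION_S)
terabytes = total_bytes / 1e12
print(f"{terabytes:,.0f} TB per minute")  # 4,800 TB per minute

# Dendritic imaging: two to three orders of magnitude more units.
for factor in (100, 1_000):
    print(f"x{factor}: {terabytes * factor / 1e6:,.2f} EB per minute")
```

Even the somatic-only figure is in the petabyte range per minute, before any processing.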

What is the connectome of a small nervous system, like that of Caenorhabditis elegans (300 neurons)? (A)

What is the complete connectome of the mouse brain (70,000,000 neurons)? (B)

What is the complete connectome of the human brain (80,000,000,000 neurons)? (C)

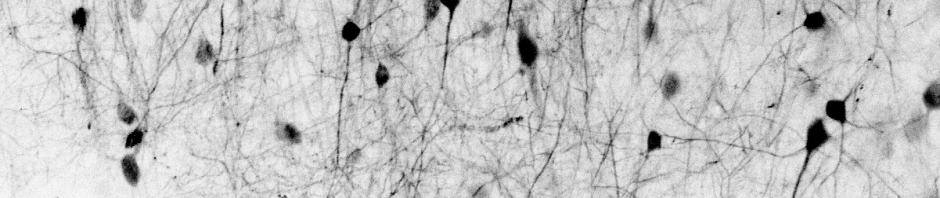

These goals are, compared to the ones above, much more accessible. As my own lab is also involved in reconstructions of neuronal networks using serial block-face electron microscopy (see this preview paper), I often hear, via my colleagues, about the technical challenges faced by the connectomics research groups of Winfried Denk, Jeff Lichtman and others. For these methods, a brain is cut into 20-30 nm slices. Every slice is imaged with 5-10 nm lateral resolution using an electron microscope. If you have enough time (some years maybe) and if you have developed an algorithm that automatically detects neuron boundaries and synapses in these data sets, the connectome of a human brain would definitely be within reach in 50 or 100 years. I would consider this realistic. I’m looking forward to seeing those results, although this will still take some decades. At the same time, this will turn connectomics into real ‘big science’, because of the need for parallelization, high computational power, long time-scales (several years or decades) and expensive computers and microscopes.
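
The slice thickness and lateral resolution quoted above translate directly into a raw data volume. The following is a rough sketch, not a statement about any lab’s actual pipeline: it assumes one byte per voxel, no overlap or compression, and brain volumes of about 500 mm³ (mouse) and about 1,200,000 mm³ (human), which are my own round-number assumptions. All names are hypothetical.

```python
# Rough raw image volume for electron-microscopy connectomics.
NM_PER_MM = 1_000_000


def voxels_per_mm3(lateral_nm, slice_nm):
    """Number of voxels in one cubic millimeter of tissue."""
    per_mm_lateral = NM_PER_MM / lateral_nm   # pixels per mm within a slice
    per_mm_axial = NM_PER_MM / slice_nm       # slices per mm
    return per_mm_lateral ** 2 * per_mm_axial


def raw_bytes(volume_mm3, lateral_nm, slice_nm, bytes_per_voxel=1):
    """Uncompressed data size for imaging a given tissue volume."""
    return volume_mm3 * voxels_per_mm3(lateral_nm, slice_nm) * bytes_per_voxel


# Assumed brain volumes in mm^3 (round numbers, not from the text).
BRAINS = {"mouse": 500, "human": 1_200_000}

for name, volume in BRAINS.items():
    coarse = raw_bytes(volume, lateral_nm=10, slice_nm=30)  # coarsest setting
    fine = raw_bytes(volume, lateral_nm=5, slice_nm=20)     # finest setting
    print(f"{name}: {coarse / 1e15:,.0f} to {fine / 1e15:,.0f} petabytes")
```

Under these assumptions a single cubic millimeter already amounts to hundreds of terabytes, which is one way to see why parallelization and long time-scales are unavoidable.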

How do single neurons compute? (A)

How do circuits of neurons compute? (B)

How does the mouse brain compute? (C)

How does the human brain compute? (D)

Is the problem of how single neurons compute already solved? I don’t think so. I do not even believe that different neurons carry out the same kind of computation. Maybe some classic model axons or dendrites are nothing but actively and passively conducting cables, respectively. But if I were nature, I would rather increase complexity by random modifications like voltage-gated channels in dendrites and channels with a huge range of different time constants that supply all kinds of channel-intrinsic memory of the neuron’s history. This is something which might even be missed by voltage-sensing dyes in dendritic trees of neurons recorded in vivo: First, classic light microscopy cannot resolve the full dendritic trees. Second, the channel kinetics are one step deeper in the hierarchy than the membrane potential description, just as the membrane potential is one step deeper than the calcium concentration description used for calcium imaging. In theory, possibilities like dendritic shunting are well-known, but I haven’t seen very convincing publications which demonstrate both dendritic shunting and its use for the particular neuron in question. This is just one example of poorly understood computations in single neurons. On the other hand, I find it less unrealistic to find out how circuits of neurons compute, if you consider a computation as the relation of the input into a circuit to the resulting output, and if you assume that the binarized activity of neurons (spike or non-spike) is a sufficient description of a neuron’s output. These computations could be normalization, decorrelation, pattern completion, recall, association, learning, etc. (Note that maybe I’m biased, because these things are the main focus of my current lab.)
All this is what is often termed ‘circuit neuroscience’, and it is usually carried out without fully understanding the computations in single neurons (unless they turn out to be really strange, like dendritic spikes in layer 1 of the cortex, or direction-tuned dendrites in the retina; but maybe everything would be so strange if only we could look closer at it). Beyond circuit neuroscience, the computations in a living brain are possibly more difficult to understand. (Btw, I would guess that understanding the computations carried out by a mouse brain would be sufficient to understand the computations done by a human brain; I can’t see, based on what I know, a qualitative difference here.) In the end, it’s all about grasping the neuronal events that underlie simple thoughts as we experience them all day. Sigmund Freud once wrote of the breakthrough moment when he first managed to trace the content of a dream (his own or that of one of his patients, I do not remember) back to the underlying causes and events in real life in a satisfying way. Likewise, if it becomes possible to trace a simple thought back to a description in terms of neuronal activities in a satisfying way, I would say that we understand a computation in the brain. It would be worthwhile to spend more time on elaborating what such a description might look like.

This is a really nice and concise critique of the original ideas.

I agree completely that we shouldn’t expect qualitative differences past a certain level of brain development (namely from mouse to human) but it does provoke interesting questions regarding how our brains allow us the experiences we have and not those of a mouse. Will it be just the sheer amount of neuronal investment in particular processes, or perhaps something to do with absolute interconnectedness (i.e. perhaps awareness/consciousness and similar are grounded in degrees of connections on top of the same basic processing abilities)? I’m pretty much just ad lib-ing here.

I like the idea of a helmet to map activity but how in that paradigm would we determine the origin of the signal? That would be very difficult I’d assume.

Brain, mind, and behaviour truly are some of the most complicated, beautiful, and interesting phenomena, and I hope humanity will one day advance much further than where it is now, because we really are not much further than Galileo was when looking at the stars, knowing they were important but not exactly understanding why.

Again, well done and thank you for the read :)

A different approach to understanding the limitations of ‘imaging the whole human brain’ is not to upscale single-neuron techniques, but to ask whether existing whole-brain imaging techniques could be improved to reach single-cell resolution. The first techniques that come to my mind are PET and SPECT on the one hand (non-scanning methods), and fMRI on the other (the newspapers’ ‘brain scanner’, and therefore a scanning method).

The spatial resolution of fMRI is around 1-5 mm according to Wikipedia; the temporal resolution of the scanner matters little, as the observed BOLD signal is slow anyway (timescale of seconds), so acquisition speed is not the limiting factor.

SPECT has a very poor spatial resolution (1 cm according to Wikipedia). PET, however, is an interesting technique. A review (http://www.ncbi.nlm.nih.gov/pmc/articles/PMC3144741/) describes both the technical and the more fundamental physical limitations of PET, and arrives at something like 2 mm for human brain imaging, even if all technical limitations such as detector size were overcome. These fundamental limitations have to do with the non-zero temperature in the environment of the positron that is used for generating two (gamma) photons of (almost) opposite propagation direction. I suppose that any mechanism that is used for a similar process will have similar issues and similar resolution limits.

Whereas PET cannot resolve 1 mm³, single-cell imaging cannot yet cover more than a fraction of 1 mm³ at any typical temporal resolution. That is how big the gap between whole-brain and single-cell imaging is at this moment.

And 1 mm is a lot! Even if a brain were only sparsely populated with neurons (say, one neuron per volume of (50 µm)³), a cubic millimeter would contain about 8,000 neurons, i.e. on the order of 10,000. Not even close to single-neuron resolution.
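
The neuron count per imaging voxel follows from simple geometry. A minimal sketch, assuming cubic voxels and the deliberately sparse packing of one neuron per (50 µm)³ used above; the function name is made up for illustration.

```python
def neurons_in_cube(edge_mm, neuron_edge_um=50.0):
    """Neurons inside a cube of tissue, one neuron per (neuron_edge_um)^3."""
    neurons_per_edge = edge_mm * 1000.0 / neuron_edge_um  # mm -> um
    return neurons_per_edge ** 3


print(neurons_in_cube(1.0))  # 8000.0 neurons in 1 mm^3
print(neurons_in_cube(2.0))  # 64000.0 neurons in a (2 mm)^3 voxel
```

The second call uses the roughly 2 mm limit mentioned for PET: a voxel of that edge length would lump together tens of thousands of neurons even under this sparse assumption.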

Of course I do not have an answer to any of your questions …

The ‘helmet’ idea would be like this: First, you put a small camera on top of the head to monitor activity. To get more information, you put a second camera at the back of the head. Then another one to the left, to the right, and then in all the spaces in-between. Voilà, there’s the helmet. Maybe a very big and heavy helmet, though ; ) Then, let’s miniaturize the cameras and put small lenses in front of them, such that each pixel gets a different piece of information. A concept I loosely had in mind was the principle of light field microscopy, which was used by my former lab in Vienna (among others): http://www.nature.com/nmeth/journal/v11/n7/full/nmeth.2964.html. This is already very difficult, though, and requires computer clusters just to reconstruct the movie of a tiny worm brain.

Of course it is totally unrealistic to do this with a big and scattering piece of tissue such as a human head! I hope it was clear that the description of these ideas was not meant as a realistic outlook …